Get started with Webhooks

Step-by-step instructions for ingesting events into Propel via Webhooks.

Architecture

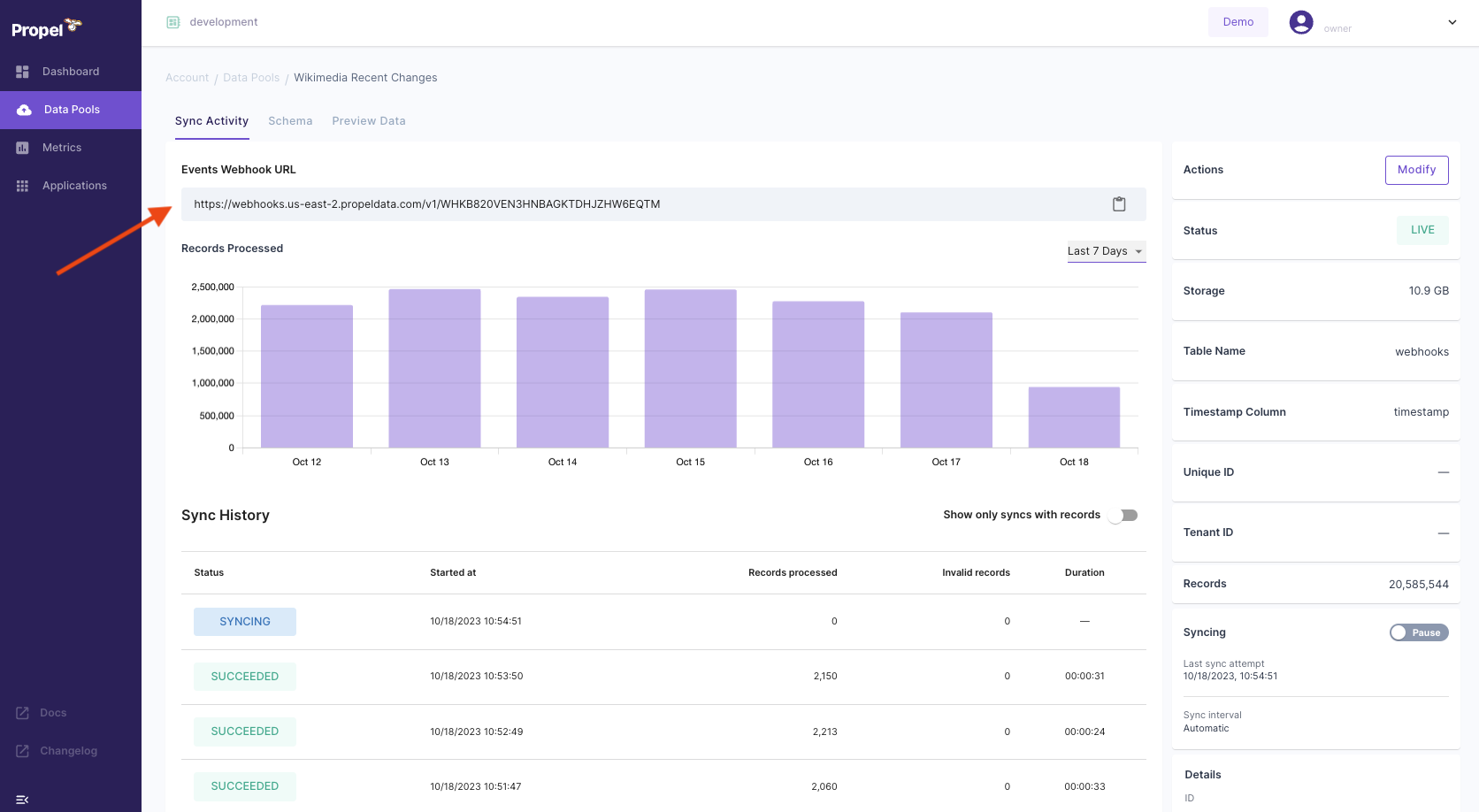

Webhook Data Pools provide an HTTP URL that your applications, streaming infrastructure, or SaaS applications can send events to for ingestion into Propel.

Features

Webhook Data Pools support the following features:

| Feature name | Supported | Notes |
|---|---|---|
| Event collection | ✅ | Collects events in JSON format. |
| Real-time updates | ✅ | See the Real-time updates section. |
| Real-time deletes | ✅ | See the Real-time deletes section. |
| Batch Delete API | ✅ | See Batch Delete API. |
| Batch Update API | ✅ | See Batch Update API. |
| Bulk insert | ✅ | Up to 500 events per HTTP request. |
| API configurable | ✅ | See API docs. |
| Terraform configurable | ✅ | See Terraform docs. |

How does the Webhook Data Pool work?

Creating a Webhook Data Pool provides you with an HTTP URL for posting JSON events. The posted events are collected in the Data Pool and can be accessed using SQL or the Query APIs.

By default, a Webhook Data Pool has the following columns:

| Column | Type | Description |
|---|---|---|
| `_propel_received_at` | TIMESTAMP | The UTC timestamp at which the event was collected. |
| `_propel_payload` | JSON | The full JSON payload of the event. |

When creating a Webhook Data Pool, you can flatten top-level or nested JSON keys into specific columns.
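To illustrate what flattening means, the sketch below pulls values out of a payload by dotted key path and assigns them to column names. It only models the idea locally: the paths and column names (`event_id`, `customer.id`, `customer_id`) and the helper functions are hypothetical, Propel performs the real extraction server-side from the columns you configure, and the full payload is still kept in `_propel_payload`.

```python
from typing import Any


def extract_path(payload: dict[str, Any], path: str) -> Any:
    """Follow a dotted path such as "customer.id" into nested objects.

    Returns None if any key along the path is missing.
    """
    value: Any = payload
    for key in path.split("."):
        if not isinstance(value, dict) or key not in value:
            return None
        value = value[key]
    return value


def flatten(payload: dict[str, Any], columns: dict[str, str]) -> dict[str, Any]:
    """Map each (hypothetical) column name to the value found at its JSON path."""
    return {column: extract_path(payload, path) for column, path in columns.items()}


event = {"event_id": "e-1", "customer": {"id": 42, "plan": "pro"}}
row = flatten(event, {"event_id": "event_id", "customer_id": "customer.id"})
print(row)  # {'event_id': 'e-1', 'customer_id': 42}
```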

Authentication

You can add basic authentication to your HTTP endpoint to secure its URL. If you do not set a username and password, anyone with your webhook URL can post events to it.

We recommend enabling authentication in production.
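A minimal sketch of building an authenticated POST for a single event with the Python standard library. The URL reuses the placeholder Data Pool ID format used elsewhere in this guide; `USERNAME`, `PASSWORD`, and `build_request` are hypothetical names, so substitute your own webhook URL and credentials. Passing a list of events instead of a single dict as `body` produces a JSON array, which is how batches are sent.

```python
import base64
import json
import urllib.request

# Hypothetical placeholders: use your Data Pool's webhook URL and the
# basic-auth username and password you configured for it.
WEBHOOK_URL = "https://webhooks.us-east-2.propeldata.com/v1/WHK00000000000000000000000000"
USERNAME = "my-user"
PASSWORD = "my-password"


def build_request(url: str, body: object, username: str, password: str) -> urllib.request.Request:
    """Build a POST request with a JSON body and an HTTP Basic Authorization header.

    `body` can be a single event (dict) or a batch of events (list of dicts).
    """
    data = json.dumps(body).encode("utf-8")
    credentials = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    return urllib.request.Request(
        url,
        data=data,
        method="POST",
        headers={"Content-Type": "application/json", "Authorization": f"Basic {token}"},
    )


request = build_request(WEBHOOK_URL, {"event_id": "e-1", "amount": 9.99}, USERNAME, PASSWORD)

# Uncomment to send the event; urlopen raises urllib.error.HTTPError on 4xx/5xx.
# with urllib.request.urlopen(request) as response:
#     print(response.status)
```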

Sending individual JSON events

Send individual events by POSTing the JSON event in the request body.

Sending a batch of JSON events

Send a batch of JSON events by POSTing a JSON array of events in the request body. Each request can have a maximum of 500 events in the array.

Errors

- HTTP 429 Too Many Requests - Returned when your application is being rate limited by Propel. Retry with exponential backoff.

- HTTP 413 Content Too Large - Returned if there are more than 500 events in a single request or the payload exceeds 1,048,320 bytes. Fix the request; retrying won’t help.

- HTTP 400 Bad Request - Returned when the request does not match the Data Pool schema (for example, a required field is missing) and Propel rejects it. Fix the request; retrying won’t help.

- HTTP 500 Internal Server Error - Returned if something went wrong in Propel. Retry with exponential backoff.
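The retry guidance above can be sketched as a small wrapper. This is a minimal sketch, not a Propel client: `send_with_backoff`, `urllib_sender`, and `WebhookError` are hypothetical names, and the attempt count and delays are arbitrary defaults you should tune. It retries only 429 and 500 and fails immediately on everything else, including 400 and 413.

```python
import random
import time
import urllib.error
import urllib.request
from typing import Callable

# Per the error list above: 429 and 500 are worth retrying.
# Any other non-2xx status (including 400 and 413) fails immediately.
RETRYABLE_STATUSES = {429, 500}


class WebhookError(Exception):
    """Raised when the webhook returns a non-2xx status that will not be retried."""

    def __init__(self, status: int) -> None:
        super().__init__(f"webhook request failed with HTTP {status}")
        self.status = status


def send_with_backoff(
    send: Callable[[], int],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Call `send` (which returns an HTTP status) until it returns 2xx.

    Retries only 429 and 500 with exponential backoff (0.5 s, 1 s, 2 s, ...
    by default, plus up to 100% random jitter) and makes at most
    `max_attempts` calls (always at least one).
    """
    attempt = 0
    while True:
        status = send()
        if 200 <= status < 300:
            return status
        attempt += 1
        if status not in RETRYABLE_STATUSES or attempt >= max_attempts:
            raise WebhookError(status)
        delay = base_delay * 2 ** (attempt - 1)
        sleep(delay + random.uniform(0, delay))


def urllib_sender(request: urllib.request.Request) -> Callable[[], int]:
    """Adapt a prepared urllib Request into a `send` callable returning the status."""

    def send() -> int:
        try:
            with urllib.request.urlopen(request) as response:
                return response.status
        except urllib.error.HTTPError as err:
            # urlopen raises for 4xx/5xx; the status code is on the exception.
            return err.code

    return send
```

To use it, wrap any prepared `urllib.request.Request` for your webhook URL: `send_with_backoff(urllib_sender(request))`. Network failures such as `urllib.error.URLError` are not caught here and propagate to the caller.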

Use the `disable_partial_success=true` query parameter to ensure that if any event in a batch fails validation, the entire request fails.

For example: https://webhooks.us-east-2.propeldata.com/v1/WHK00000000000000000000000000?disable_partial_success=true
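If your client builds the webhook URL from configuration, a helper like the one below can add the parameter without discarding other query parameters. It is a minimal sketch using `urllib.parse`; `with_query_param` is a hypothetical name, and because it rebuilds the query from a dict, repeated keys collapse to one value.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_query_param(url: str, name: str, value: str) -> str:
    """Return `url` with the query parameter `name=value` added or replaced."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query, keep_blank_values=True))
    query[name] = value
    return urlunsplit(parts._replace(query=urlencode(query)))


url = with_query_param(
    "https://webhooks.us-east-2.propeldata.com/v1/WHK00000000000000000000000000",
    "disable_partial_success",
    "true",
)
print(url)
# https://webhooks.us-east-2.propeldata.com/v1/WHK00000000000000000000000000?disable_partial_success=true
```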

Schema changes

The Webhook Data Pool is designed to handle semi-structured, schema-less JSON data. This flexibility allows you to add new properties to your payload as needed. The entire payload is always stored in the `_propel_payload` column.

However, Propel enforces the schema for required fields. If you stop providing data for a required field that was previously unpacked into its own column, Propel will return an error.
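Because a missing required field makes Propel reject the request, you may want to check events on the client before sending them. The sketch below is a local guard, not a Propel API: `REQUIRED_FIELDS` is a hypothetical list that you would keep in sync with the columns you marked as required, and treating a JSON `null` as missing is a conservative assumption.

```python
from typing import Any, Iterable

# Hypothetical: the JSON paths you unpacked into required columns.
REQUIRED_FIELDS = ["event_id", "timestamp", "customer.id"]

# Sentinel that distinguishes "key absent" from an explicit null.
_MISSING = object()


def lookup(event: dict[str, Any], path: str) -> Any:
    """Follow a dotted path into nested objects, returning _MISSING if absent."""
    value: Any = event
    for key in path.split("."):
        if not isinstance(value, dict) or key not in value:
            return _MISSING
        value = value[key]
    return value


def missing_required(event: dict[str, Any], required: Iterable[str] = REQUIRED_FIELDS) -> list[str]:
    """Return the required paths that are absent or null in `event`."""
    missing = []
    for path in required:
        value = lookup(event, path)
        if value is _MISSING or value is None:
            missing.append(path)
    return missing


event = {"event_id": "e-1", "timestamp": "2024-01-01T00:00:00Z", "customer": {}}
print(missing_required(event))  # ['customer.id']
```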

Adding Columns

1. Go to the Schema tab

   Go to the Data Pool and click the “Schema” tab, then click the “Add Column” button to define the new column.

2. Add column

   Specify the JSON property to extract, the column name, and the type, then click “Add column”.

3. Track progress

   After you click “Add column”, an asynchronous operation begins adding the column to the Data Pool. You can track its progress in the “Operations” tab.

Note that adding a column does not backfill existing rows. To backfill, run a batch update operation.

Column deletions, modifications, and data type changes are not supported as they are breaking changes to the schema. If you need to change the schema, you can create a new Data Pool.

Data Types

The table below shows the default mappings from JSON types to Propel types. You can change these mappings when creating a Webhook Data Pool.

| JSON Type | Propel Type |
|---|---|
| String | STRING |
| Number | DOUBLE |
| Object | JSON |
| Array | JSON |
| Boolean | BOOLEAN |
| Null | JSON |
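When checking a sample event before creating the Data Pool, a local helper that mirrors the default mappings can show which Propel type each field would get. This is a sketch of the table only, not part of Propel; `default_propel_type` is a hypothetical name. Note that in Python `bool` is a subclass of `int`, so booleans must be tested before numbers.

```python
import json
from typing import Any


def default_propel_type(value: Any) -> str:
    """Return the default Propel type for a decoded JSON value, per the mapping table."""
    # Check bool first: isinstance(True, int) is True in Python.
    if isinstance(value, bool):
        return "BOOLEAN"
    if isinstance(value, (int, float)):
        return "DOUBLE"
    if isinstance(value, str):
        return "STRING"
    # Objects, arrays, and null all default to JSON.
    if value is None or isinstance(value, (dict, list)):
        return "JSON"
    raise TypeError(f"not a JSON value: {type(value).__name__}")


sample = json.loads('{"name": "a", "n": 1, "ok": true, "tags": [], "meta": {}, "x": null}')
print({key: default_propel_type(value) for key, value in sample.items()})
# {'name': 'STRING', 'n': 'DOUBLE', 'ok': 'BOOLEAN', 'tags': 'JSON', 'meta': 'JSON', 'x': 'JSON'}
```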

Limits

- Each POST request can include up to 500 events (as a JSON array).

- The payload size can be up to 1,048,320 bytes (just under 1 MiB).
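Both limits can be enforced on the client before sending. The sketch below, assuming the byte limit applies to the serialized JSON request body, greedily packs events into JSON-array bodies of at most 500 events and 1,048,320 bytes. `batch_bodies` is a hypothetical name; it returns the encoded bytes it measured, so the size check matches exactly what you send.

```python
import json
from typing import Any, Iterable, Iterator

MAX_EVENTS = 500
MAX_BYTES = 1_048_320


def batch_bodies(
    events: Iterable[Any],
    max_events: int = MAX_EVENTS,
    max_bytes: int = MAX_BYTES,
) -> Iterator[bytes]:
    """Yield JSON-array request bodies that respect both per-request limits."""
    batch: list[bytes] = []
    size = 2  # the enclosing "[" and "]"
    for event in events:
        # Compact separators keep the body small; this is the exact encoding sent.
        encoded = json.dumps(event, separators=(",", ":")).encode("utf-8")
        if len(encoded) + 2 > max_bytes:
            raise ValueError("a single event exceeds the payload limit")
        extra = len(encoded) + (1 if batch else 0)  # +1 for the comma separator
        if batch and (len(batch) == max_events or size + extra > max_bytes):
            yield b"[" + b",".join(batch) + b"]"
            batch, size = [], 2
            extra = len(encoded)
        batch.append(encoded)
        size += extra
    if batch:
        yield b"[" + b",".join(batch) + b"]"
```

Each yielded body can be POSTed as-is with `Content-Type: application/json`. Raising on a single oversized event mirrors the 413 guidance: such an event cannot be fixed by retrying.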

Best Practices

- Send as many events per request as possible, without exceeding 500 events or 1,048,320 bytes, to speed up ingestion.

- Retry on 429 (Too Many Requests) and 500 (Internal Server Error) responses with exponential backoff to ensure no events are lost.

- Alert or page on 413 (Content Too Large) errors. This indicates the event count or payload size was exceeded, and retrying will not help.

- Create the Webhook Data Pool with all required fields. Use a sample event and the “Extract top-level fields” feature during the creation process.

- Set the primary timestamp to the correct event timestamp. If it is not set, `_propel_received_at` is used by default.

- Mark all fields except the primary timestamp as not required to reduce 400 (Bad Request) errors. This allows Propel to accept the request even if some data is missing, and you can backfill the missing data later.

- Alert or page on 400 errors. This indicates a schema issue that needs investigation, as retrying will not help.

- Verify all data types. Ensure objects, arrays, and dictionaries are set as JSON in Propel.

- Enable basic authentication on the Webhook Data Pool to secure the endpoint.

Transforming data

Once your data is in a Webhook Data Pool, you can use Materialized Views to:

- Flatten nested JSON into tabular form

- Flatten JSON arrays into individual rows

- Combine data from multiple source Data Pools through JOINs

- Calculate new derived columns from existing data

- Perform incremental aggregations

- Sort rows with a different sorting key

- Filter out unnecessary data based on conditions

- De-duplicate rows

Frequently Asked Questions

How long does it take for an event to be available via SQL or the API?


Once an event is collected, the data will be available in Propel and accessible via SQL or the API within 10 seconds to 2 minutes.